End-of-Summer Project Review

Post-mortem on Draftpilot, Mailcat, AIPA

Scientists experimenting with beakers, graphs and equations on a whiteboard, Stable Diffusion 1.5

Hello everyone! My co-founder Russ and I have worked on a number of prototypes over the past few months, and I wanted to take some time to share what we explored over the summer, what we learned, and why we changed directions.

Draftpilot, an automated coding agent

- It's hard to sell engineers on a value prop of “AI codes for you” at a price point that makes financial sense. GitHub Copilot at $10/month is a really low anchor - Microsoft can afford to lose money hand over fist, but we can’t.

- It’s hard to build a general-purpose coding agent with GPT-4 that you can instruct with natural language, that edits code reliably, and that fails gracefully (at least as of May 2023). Draftpilot had multiple types of agents capable of reading code, doing research, editing files in-place, and running tests, and it failed a lot.

- That said, it worked about half of the time, and it feels truly magical when it works. It feels like a superpower to get stuff done from your smartphone while in bed.

- With code writing, failure is very hard to recover from - if it’s written the wrong code or put it in the wrong place, trying to use natural language to fix it is awkward and difficult. I found I just wanted to edit it myself.

- I tried multiple form factors for the UX - they all had pros and cons:

Command line utility

- good: can be interactive, very easy to write, and easy for a new user to get started with by just running a script. For those already comfortable with git on the CLI, it’s very easy to stage and revert files to recover from issues

- bad: works on your local copy, so you can’t do something else while it’s working. If a solution partially works, it’s not easy to keep some changes but not others

Local machine browser experience

- good: interactive and visual, you can pick which changes to keep, you can browse and select files, and the overall editing experience was pretty nice. You can have it “propose changes” so it doesn’t actually edit your files until you accept

- bad: you have to switch between browser, IDE and command line to get stuff done. You can’t really use it when away from your computer

Hosted web service

- good: anyone can use it, including non-technical people. Very easy to get started by hooking up to GitHub. Puts you in the mode of code review, which is very natural on GitHub

- bad: making changes yourself is really slow, as you have to pull down the remote branch. So you try to get the bot to fix the issues instead, which is cumbersome because you have to write a bunch of GitHub comments

In-IDE assistant

- good: right at your fingertips, very fast to recover from failures

- bad: I would want to just make the change myself because I’m right there in the IDE and it’s slower to ask the AI

In truth, the lack of a killer form factor was the proximate reason I abandoned the project - everything was slightly cumbersome, all because the generations wouldn’t be perfect the first time and many required some editing.

I believe the economics hurdle can be overcome if the product is sufficiently magical, but alas, so far I haven't seen it here or anywhere.
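To make the “propose changes” idea from the browser experience more concrete, here is a minimal sketch of that flow: the agent's edits are held as proposals, shown as diffs, and only the accepted ones are applied. This is not Draftpilot's actual code. The names `ProposedChange` and `apply_accepted` are hypothetical, and files are modeled as an in-memory dict rather than on disk to keep the example self-contained.

```python
import difflib
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class ProposedChange:
    """A hypothetical container for one file edit the agent wants to make."""
    path: str
    original: str
    proposed: str

    def diff(self) -> str:
        # Render the proposal as a unified diff so a human can review it.
        return "".join(
            difflib.unified_diff(
                self.original.splitlines(keepends=True),
                self.proposed.splitlines(keepends=True),
                fromfile=f"a/{self.path}",
                tofile=f"b/{self.path}",
            )
        )


def apply_accepted(
    files: Dict[str, str],
    changes: List[ProposedChange],
    accepted: Set[str],
) -> Dict[str, str]:
    """Return a copy of `files` with only the accepted proposals applied."""
    result = dict(files)  # never mutate the caller's working copy
    for change in changes:
        if change.path in accepted:
            result[change.path] = change.proposed
    return result


if __name__ == "__main__":
    files = {"a.py": "x = 1\n", "b.py": "y = 2\n"}
    changes = [
        ProposedChange("a.py", files["a.py"], "x = 10\n"),
        ProposedChange("b.py", files["b.py"], "y = 20\n"),
    ]
    for change in changes:
        print(change.diff())
    # Keep the edit to a.py, reject the edit to b.py.
    print(apply_accepted(files, changes, accepted={"a.py"}))
```

Accepting per file is the simplest granularity. Keeping some hunks of a file but not others, which is what the CLI version made hard, would need per-hunk selection on top of this.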

Mailcat, a system for categorizing emails and extracting next actions

Our goal with Mailcat was to turn your email inbox into an actual todo list - pulling out action items from your emails, grouping emails that could be processed in bulk, and helping draft responses. We built a prototype that could do all of those things, but decided not to go further.

- GPT-4 would get confused on any email that had multiple parts (e.g. person A replies to B) - when pulling out action items, it would consistently have issues with pronoun interpretation. I think this is a fundamental challenge with transformer-based models - a way to solve this might be to pre-process emails and rewrite all sentences in the third person first.

- Categorizing emails to operate in batch felt magical. Being able to group all cold sales emails together and delete them all at once was fantastic, and only possible with powerful categorization. With categories like "calendar invite" and "newsletter", it just felt really nice to see my inbox in this way.

- Extracting the next action from an email is a tricky task - I didn’t feel the model was that accurate at guessing what to do next with an email.

- Auto-composing responses was really handy, but the tone was hard to nail - GPT has a certain voice by default. If people could tell an AI wrote the email, it was unclear to them whether the human ever read it at all, and we weren't sure how to properly convey that the human did indeed read the email, even though GPT drafted the response.

- Most importantly, email seems to be a graveyard of failed products. We did a big search of email productivity products, and even though everyone spends a ton of their day on email, it doesn't seem like most people are willing to pay - Superhuman isn't exactly killing it out there.
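The pre-processing idea from the first point above (rewrite everything in the third person before extracting action items) can be sketched as a prompt-building step. This is an untested idea, not something Mailcat shipped: the `Message` type, the `build_rewrite_prompt` function, and the prompt wording are all assumptions, and the sketch stops short of calling any model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Message:
    """One part of an email thread (hypothetical structure)."""
    sender: str
    recipients: List[str]
    body: str


def build_rewrite_prompt(thread: List[Message]) -> str:
    """Build a prompt asking a model to restate a thread in the third person.

    Each message is labeled with its sender and recipients so that "I",
    "you" and "we" can be resolved to names before action items are extracted.
    """
    instructions = (
        "Rewrite every sentence in the email thread below in the third person. "
        "Replace first- and second-person pronouns with the names of the people "
        "they refer to, using the From and To labels. Do not add or remove "
        "information."
    )
    parts = []
    for index, message in enumerate(thread, start=1):
        to = ", ".join(message.recipients)
        parts.append(
            f"[Message {index}] From: {message.sender} | To: {to}\n"
            f"{message.body.strip()}"
        )
    return instructions + "\n\n" + "\n\n".join(parts)


if __name__ == "__main__":
    thread = [
        Message("Alice", ["Bob"], "Can you send me the contract by Friday?"),
        Message("Bob", ["Alice"], "Sure, I'll send it once you confirm the price."),
    ]
    print(build_rewrite_prompt(thread))
```

The rewritten text would then be passed to the action-item extraction step in place of the raw thread, at the cost of an extra model call per email.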

AIPA, project management for humans

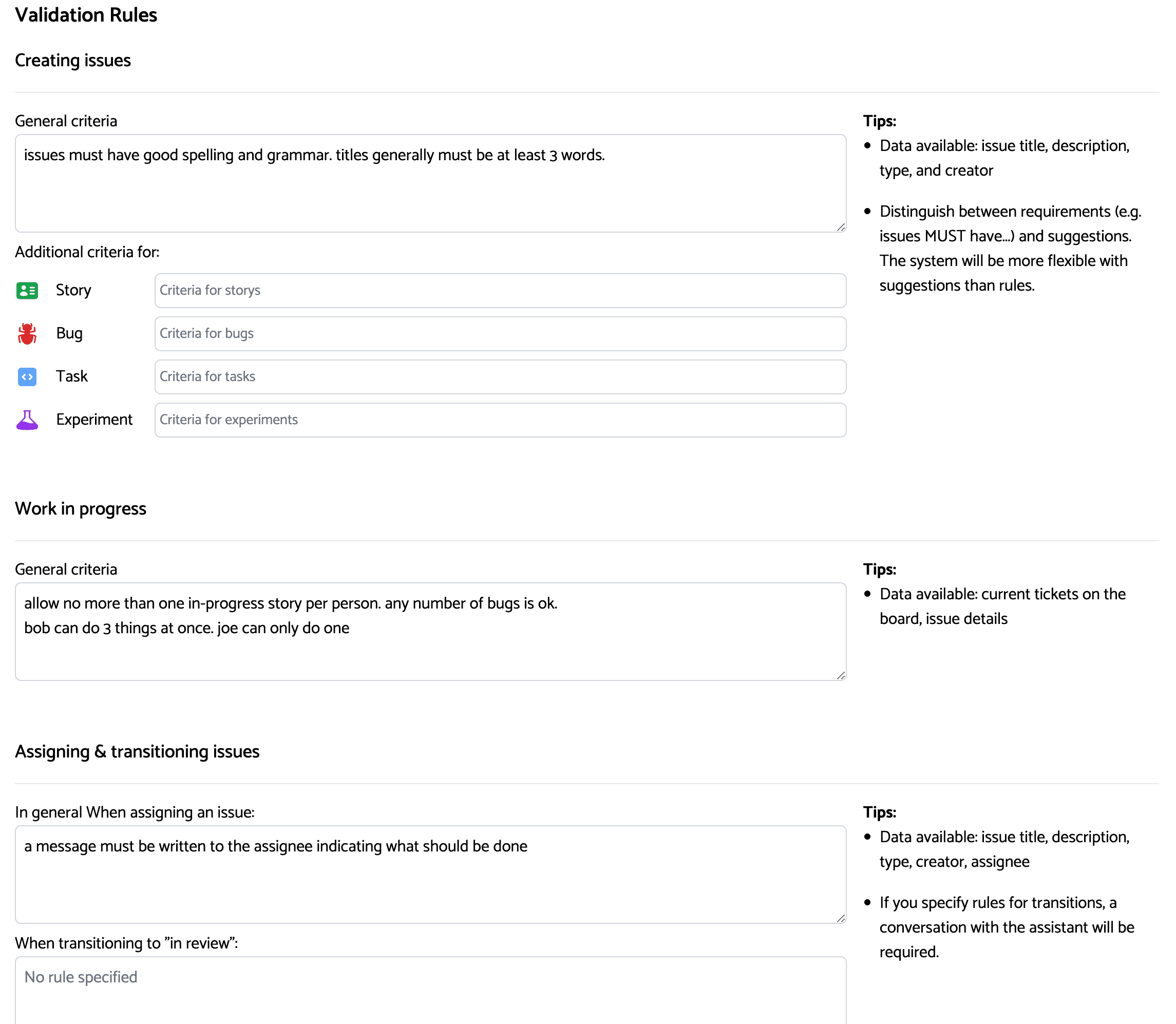

Prototype "issue rules" interface

AIPA (AI Project Assistant) was designed to enforce arbitrary rules in projects to improve project hygiene. It was conceived not to replace tools like JIRA or Linear, but to do the work of a project manager on top of those products, making sure processes were being followed and ensuring good team communication on tickets.
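As a rough illustration of what "enforcing rules" on tickets could look like, here is a minimal sketch in which each rule is a description plus a predicate over an issue. It is not the AIPA prototype: the `Issue` fields, the two example rules, and `find_violations` are hypothetical, and a real version would read issues from a tracker's API and could phrase rules in natural language.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Issue:
    """A simplified ticket (hypothetical fields, not a JIRA or Linear schema)."""
    key: str
    status: str
    assignee: Optional[str]
    days_since_update: int


@dataclass
class Rule:
    description: str
    violated_by: Callable[[Issue], bool]


# Example hygiene rules; the thresholds are arbitrary assumptions.
RULES = [
    Rule(
        "In-progress issues need an assignee",
        lambda issue: issue.status == "in_progress" and issue.assignee is None,
    ),
    Rule(
        "In-progress issues need an update at least every 7 days",
        lambda issue: issue.status == "in_progress" and issue.days_since_update > 7,
    ),
]


def find_violations(issues: List[Issue], rules: List[Rule]) -> List[Tuple[str, str]]:
    """Return (issue key, rule description) pairs for every broken rule."""
    return [
        (issue.key, rule.description)
        for issue in issues
        for rule in rules
        if rule.violated_by(issue)
    ]


if __name__ == "__main__":
    issues = [
        Issue("PROJ-1", "in_progress", None, 2),
        Issue("PROJ-2", "in_progress", "russ", 10),
        Issue("PROJ-3", "done", None, 30),
    ]
    for key, description in find_violations(issues, RULES):
        print(f"{key}: {description}")
```

Each violation would then become a nudge on the ticket, which is the part a human project manager normally does by hand.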

We also explored an idea of a bug assistant that would help teams do bug triage, follow up with users on reproduction steps and when bugs were fixed, and distribute the work of fixing bugs more fairly across engineering teams.

We didn't get that far - after interviewing a few teams, it seemed difficult to deliver a 10x better experience in any dimension, and the primary challenges turned out to be organizational and cultural in nature.

It may be fair to say that good teams don't struggle too badly with these problems, and the teams that do often have bigger problems.

We did one more project in August, but that'll be a whole post on its own, I think. Don't worry - I'll share more about what we're working on too. Cheers!